
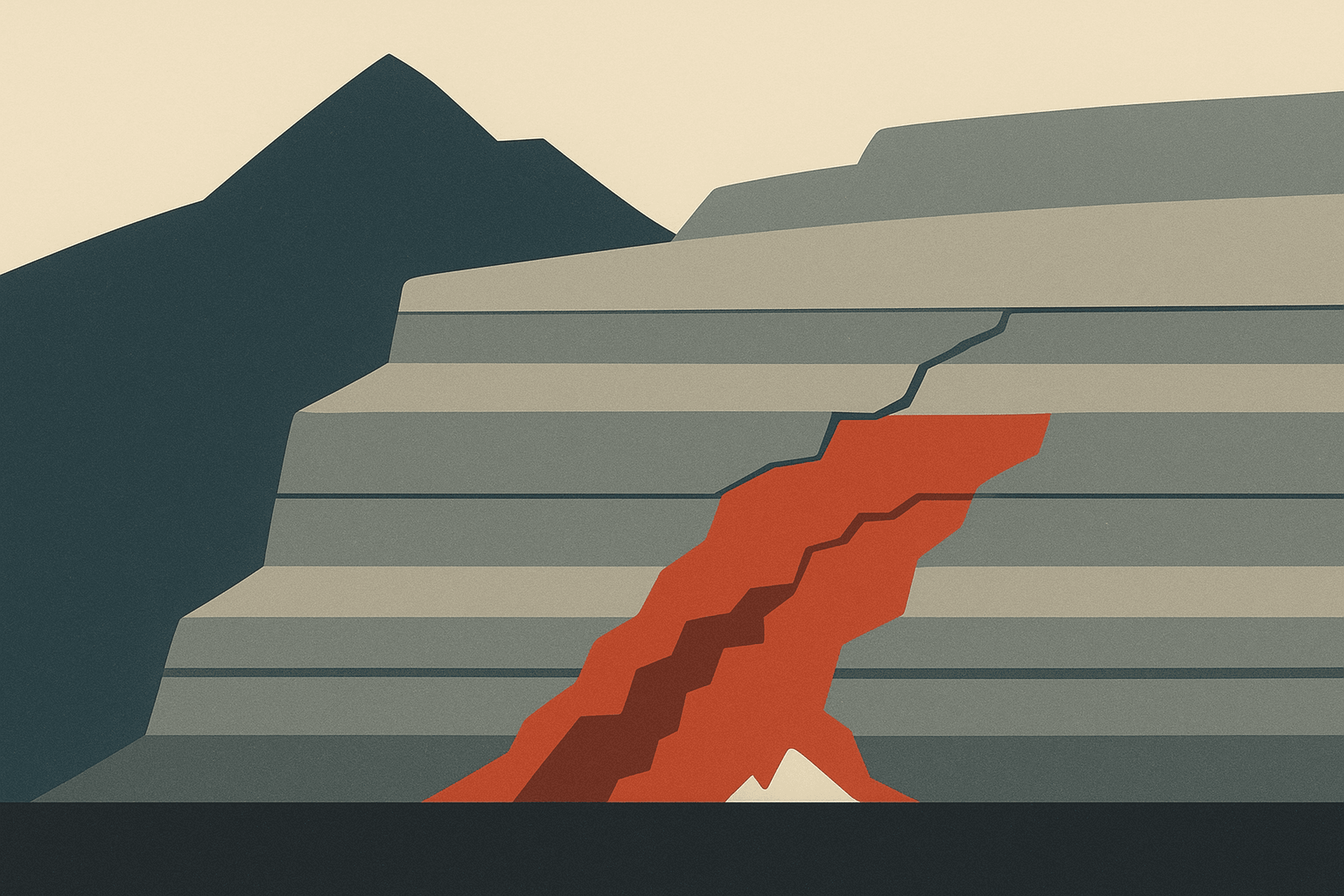

LLM-Generated Code Is Creating a New Kind of Technical Debt

13 min read

Imran Gardezi


Written by Imran Gardezi at Modh.

Published January 11, 2026.



66% of developers say their number one frustration with AI coding tools is code that's "almost right, but not quite."

Stack Overflow's 2024 Developer Survey. Over 65,000 respondents. Worldwide.

And nobody's saying the quiet part out loud.

"Almost right" is more expensive than "completely wrong."

Completely wrong gets caught. Tests fail. Build breaks. Someone says "this doesn't work" and you throw it away. The cost is minutes, maybe an hour. You move on.

Almost right? Almost right passes code review. Almost right ships to production. Almost right sits in your codebase for six months before anyone realizes it's wrong. It looks correct at a glance. It handles the happy path. It even has comments explaining what it does. But buried somewhere in the implementation is an assumption that doesn't hold, a pattern that doesn't match your system, or a shortcut that trades clarity for brevity.

And by then? The cost to fix it has compounded into something nobody budgeted for. Other code has been built on top of it. Dependencies have formed. Team members have adjusted their mental models around the flawed implementation. Fixing it now means untangling months of work that assumed the foundation was solid.

We manage six client codebases right now. Every single one of them is generating this debt. I've been shipping production systems for 15 years. This is a new pattern. And it's everywhere.

Today I'm going to show you the 5 specific patterns of AI-generated debt, why they're harder to catch than traditional debt, and the 3-question code review framework we use on every AI-generated PR.

Five patterns. One principle. One framework. Let's go.

The PR That Looked Perfect

Client project. Mid-sized startup. Good engineers. They'd adopted AI coding tools across the team: Copilot, Cursor, the works. Velocity was up. PRs were flowing. Everyone was happy.

I open a pull request. Clean code. Tests passing. TypeScript. Well-structured. Looks great.

Then I start reading the actual lines.

eslint-disable-next-line @typescript-eslint/no-explicit-any.

Then another one.

eslint-disable-next-line no-unused-vars.

Then another.

Eleven eslint-disable comments. In one file.

And this wasn't an outlier. I searched the rest of the codebase. Over 200 eslint-disable comments across the project. Most added in the last three months, since the team started using AI tools. The pattern was unmistakable. Before AI adoption: a handful of justified suppressions with explanatory comments. After AI adoption: hundreds of suppressions scattered across the codebase like band-aids on a patient nobody had actually examined.

The AI hadn't fixed the TypeScript errors. It had suppressed them. Every single one. The type system was screaming "this is wrong" and the AI's solution was to put tape over the warning light.

And here's the brutal part. This PR had been approved. By a human. A senior engineer looked at this, saw green checks, and hit merge. Not because they were lazy. Because the code looked right. It passed every automated gate. The suppressions were hidden in plain sight, buried between lines of otherwise clean, well-structured code that didn't raise any visual alarms during a quick review.

Two weeks later, a production bug traced back to one of those suppressed type errors. The type system tried to warn us. We told it to shut up. And the cost wasn't just the bug itself. It was the trust erosion. The team had to go back through all 200 suppressions, evaluate each one, and determine which were hiding real problems. That audit took a week of engineering time that should have gone toward shipping features.

The Broken Auth Flow

True story. Client had an OAuth PKCE authentication flow. For anyone who doesn't know, PKCE (Proof Key for Code Exchange) is how your app proves that the client redeeming an authorization code is the same client that asked for it. It's a multi-step handshake between your app and the auth provider, involving code verifiers, code challenges, and token exchanges. Get any step wrong and authentication either fails silently or creates a security vulnerability.

The AI generated the entire flow. It looked correct. Variable names made sense. Steps were in the right order. It even had comments explaining what each step did. A reviewer scanning the PR would see a textbook implementation.

One problem. The token exchange assumed localStorage would always be available for the code verifier. Works in Chrome. Works in Firefox. Fails silently in Safari private browsing, which is how 20% of their users logged in. The AI had generated the most common implementation pattern from its training data, and that pattern happens to break in one of the most common browsing configurations on the internet.

Two days to reproduce the bug. Two hours to fix it. The disparity tells the whole story. The fix was straightforward once someone understood the system well enough to identify the root cause. But nobody on the team had that understanding, because the AI had written the entire flow and the review process hadn't required anyone to deeply comprehend it.
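To make the failure mode concrete, here is a minimal sketch of a storage wrapper that doesn't assume persistent storage works. This is not the client's actual fix. The names `KeyValueStore`, `MemoryStore`, and `ResilientStore` are hypothetical, and the in-memory fallback is an assumption that only helps when the page isn't reloaded between the authorization request and the token exchange (a popup-based flow, for example). A full-page redirect would need a different fallback.

```typescript
// Minimal storage contract (hypothetical). Browser localStorage and
// sessionStorage objects both expose these three methods.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
  removeItem(key: string): void;
}

// In-memory fallback, used when the preferred store is missing or throws.
class MemoryStore implements KeyValueStore {
  private readonly items = new Map<string, string>();

  getItem(key: string): string | null {
    return this.items.get(key) ?? null;
  }

  setItem(key: string, value: string): void {
    this.items.set(key, value);
  }

  removeItem(key: string): void {
    this.items.delete(key);
  }
}

// Tries the preferred store first and falls back to memory on any failure,
// so a blocked or unavailable store never loses the value.
class ResilientStore implements KeyValueStore {
  private readonly primary: KeyValueStore | undefined;
  private readonly fallback = new MemoryStore();

  constructor(primary?: KeyValueStore) {
    this.primary = primary;
  }

  setItem(key: string, value: string): void {
    try {
      if (this.primary) {
        this.primary.setItem(key, value);
        return;
      }
    } catch {
      // Preferred store rejected the write; keep the value in memory instead.
    }
    this.fallback.setItem(key, value);
  }

  getItem(key: string): string | null {
    try {
      const value = this.primary?.getItem(key);
      if (value != null) return value;
    } catch {
      // Preferred store is unreadable; check the in-memory copy.
    }
    return this.fallback.getItem(key);
  }

  removeItem(key: string): void {
    try {
      this.primary?.removeItem(key);
    } catch {
      // Nothing to clean up in a store that cannot be reached.
    }
    this.fallback.removeItem(key);
  }
}
```

In a browser you would pass the real storage object into `ResilientStore` and save the code verifier through it. The point isn't this particular wrapper. The point is that someone has to ask "what happens when storage isn't there?" before the code ships.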

"When AI writes code nobody understands, you haven't saved time. You've borrowed it. With interest."

And here's the uncomfortable truth: some code is too critical for AI generation without deep human comprehension. Auth flows. Payment processing. Data migrations. These are systems where a subtle bug doesn't just cause a bad user experience. It causes security breaches, financial losses, or data corruption. Some code needs to be written, or at minimum deeply understood, by someone who can reason about every edge case.

Next is the eslint-disable pattern from the first story I told you. The AI encounters a TypeScript error. The correct fix requires understanding the type system, the data flow, why that type exists, and what contract it's enforcing between different parts of the application.

The fast fix? Suppress the warning. Problem gone. Warning gone. Error still there, hidden behind a comment. The AI isn't being malicious. It's optimizing for the immediate goal: make the code compile. And suppressing a warning is the shortest path to compilation.

I've seen files with 15, 20 suppress comments. Each one is a lie. Each one says "this is fine" when the type system is saying "this is wrong." Think of your type system as a team of experienced engineers whispering warnings about every piece of code. Each suppression is the equivalent of telling one of those engineers to shut up and sit down. Do it enough times and you've silenced every safety mechanism your language provides.

And they compound. The next AI-generated code references the broken types. Suppresses the new errors too. Layer on layer until the type system is meaningless. You're writing TypeScript but getting JavaScript-level safety guarantees. At that point, you've paid the complexity cost of a typed language while getting none of the benefits.
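Here's a small sketch of the difference between suppressing and fixing. The `OrderPayload` shape and both function names are hypothetical, made up for illustration. The suppressed version is shown only in a comment.

```typescript
// Hypothetical payload shape, used only for illustration.
interface OrderPayload {
  id: string;
  totalCents: number;
}

// Type guard: narrows an unknown value to OrderPayload by checking
// each field at runtime.
function isOrderPayload(value: unknown): value is OrderPayload {
  if (typeof value !== "object" || value === null) return false;
  const record = value as Record<string, unknown>;
  return (
    typeof record["id"] === "string" &&
    typeof record["totalCents"] === "number"
  );
}

// The suppressed version would look like this:
//
//   // eslint-disable-next-line @typescript-eslint/no-explicit-any
//   function parseOrderTotal(raw: any) { return raw.totalCents / 100; }
//
// It compiles, and it returns NaN the first time totalCents is missing.

// The fixed version accepts unknown and rejects anything that
// breaks the contract instead of passing bad data downstream.
function parseOrderTotal(raw: unknown): number {
  if (!isOrderPayload(raw)) {
    throw new TypeError("Payload does not match OrderPayload");
  }
  return raw.totalCents / 100;
}
```

The fixed version is a few lines longer. It's also the only one of the two where the type system is still doing its job.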

"Your type system is your immune system. AI is teaching your codebase to suppress its own immune response."

The Invisible Growth

Your codebase has a utility function. Formats dates. Used in 47 places. Handles edge cases. Tested. Works.

The AI doesn't know it exists.

So when a developer asks AI to build a new feature that needs date formatting, the AI writes a new date formatting function. Different name. Slightly different implementation. Same purpose. The developer glances at it, sees that it works, and ships it. Now your codebase has two date formatters. Neither is aware of the other. And the next time someone needs date formatting, the AI might generate a third.

Now you have two. Then three. Then five.

Same with type definitions. Your codebase has a User type. The AI creates UserData. Then UserInfo. Then UserResponse. Four types representing the same thing, with slightly different field names and slightly different optional/required configurations. Engineers start importing whichever one their AI suggested. Refactoring becomes a nightmare because changing one User type doesn't update the other three.
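One way to keep this from happening, sketched under assumptions: keep a single `User` type and derive the narrower shapes from it with TypeScript's built-in `Pick` and `Omit` utility types. The field names and the `UserSummary` and `NewUser` names are hypothetical.

```typescript
// The single source of truth (hypothetical fields).
interface User {
  id: string;
  email: string;
  displayName: string;
  createdAt: Date;
}

// Derived shapes: rename a field on User and every derived type
// either follows along or fails to compile.
type UserSummary = Pick<User, "id" | "displayName">;
type NewUser = Omit<User, "id" | "createdAt">;

// Builds the list-view shape from a full user record.
function toSummary(user: User): UserSummary {
  return { id: user.id, displayName: user.displayName };
}

// Builds a full record from the signup shape; the id and timestamp
// are supplied by the caller so the function stays deterministic.
function createUser(input: NewUser, id: string, createdAt: Date): User {
  return { ...input, id, createdAt };
}
```

When a reviewer sees a brand-new `UserData` interface in a PR, the question is whether it could have been one of these one-liners instead.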

And it generates exports nobody calls. Functions nobody references. Constants nobody imports. Why? Because AI generates "complete" modules based on training data. Not based on your codebase. It's mimicking patterns from millions of repositories, and those patterns include utility functions that made sense in the original context but serve no purpose in yours.

New engineers join the team. They see these exports. They think they're used somewhere. They're afraid to remove them. They build around them. Now the dead code is alive, and entangled. What started as an unused function becomes a dependency that someone built on because they assumed it existed for a reason.

"Your codebase is growing horizontally. Not because you're adding features. Because you're adding redundancy."

I've rebuilt 12 projects like this. You know when the founders call me? When the next feature costs three times what it should. When the simple change takes two weeks instead of two days. That's the compound interest on code nobody needed in the first place.

Now here's the one that keeps me up at night.

Every good team has conventions. How you name things. Where state lives. How errors are handled. How files are structured. These conventions aren't arbitrary style preferences. They're the patterns that let a developer open any file in the codebase and immediately understand the architecture, because every file follows the same logic. Conventions are what make a codebase navigable by humans.

AI doesn't know your conventions. It knows the internet's conventions. And the internet has a lot of opinions.

We had a client codebase where half the files used PascalCase for components and half used camelCase. Three different error handling patterns coexisted: try/catch with custom error classes, result types, and raw promise rejection. The AI had generated each file using whatever convention it felt like that day, pulling from different training examples for each prompt. The result was a codebase that looked like it had been built by fifteen different teams who had never spoken to each other.
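To show what picking one convention can look like, here's a minimal sketch that assumes the team has standardized on result types. That choice is an assumption for the example, not a recommendation over the other two patterns. `Result`, `attempt`, and `parseConfig` are hypothetical names.

```typescript
// One shared result shape, assumed here to be the team's convention.
type Result<T> = { ok: true; value: T } | { ok: false; error: Error };

// Adapter: runs code that may throw and converts the outcome into a Result,
// so throwing code can be wrapped instead of introducing a second style.
function attempt<T>(fn: () => T): Result<T> {
  try {
    return { ok: true, value: fn() };
  } catch (caught) {
    const error = caught instanceof Error ? caught : new Error(String(caught));
    return { ok: false, error };
  }
}

// Example: JSON.parse throws on malformed input; callers of parseConfig
// get a Result and never need their own try/catch.
function parseConfig(text: string): Result<unknown> {
  return attempt(() => JSON.parse(text) as unknown);
}
```

A reviewer who knows the convention can reject a raw try/catch in a new file in seconds, because there's exactly one right answer.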

And yes, newer tools like Cursor with codebase indexing are getting better at reading your context. But "better" isn't "reliable." The question is still the same: did the output actually follow your conventions, or did it approximate them?

One file that doesn't match is a quirk. Half a codebase that doesn't match is a codebase nobody can navigate.

The Principle

Five patterns. Same root cause every time: the AI generates plausible code, not correct code. Plausible means it looks like it belongs. It compiles. It passes basic tests. It follows common patterns from the training data. Correct means it works in your specific system, follows your specific conventions, reuses your specific utilities, and can be maintained by your specific team.

And plausible is the most dangerous word in software engineering.

"The most expensive code your AI writes is the code that almost works."

Completely wrong code is cheap. It fails fast. Tests catch it. You throw it away. Cost: minutes.

Almost-right code is devastating. It passes tests. It ships. It sits in production. Other code gets built on top of it. Dependencies form. Teams adjust their mental model around it. Engineers stop questioning it because it's been "working" for months.

Then it breaks. Not today. Not next week. Three months from now. Under load. In an edge case. During a critical demo. And the cost isn't fixing one file. It's untangling three months of code built on a flawed foundation. It's the architectural assumptions that spread outward from the original flaw, infecting every module that touched it.

Stack Overflow's same 2024 survey. Only 29% of developers trust AI-generated code. Down from 40% the year before. Trust is dropping because teams are learning this the hard way. They're experiencing the gap between "it works in testing" and "it works in production, at scale, under real conditions, six months later."

"Technical debt is financial debt. You're paying interest whether you see the invoice or not."

Every feature that takes longer than it should? That's the interest. Every bug that keeps reappearing? That's the interest. Every senior engineer who says "I don't understand this codebase anymore"? That's the interest. And unlike financial debt, you can't declare bankruptcy and start over. The codebase is the business. You have to fix it while it's running.

Here's what changes when a team reviews AI-generated code with a structured framework, which I'll walk through in a moment. Review times don't go up. They go down. Because reviewers aren't reading every line trying to find something wrong. They're asking three specific questions with clear pass/fail criteria. The structure actually makes reviews faster because there's no ambiguity about what you're looking for.

One client team went from 3-day review turnaround to same-day. Not because they reviewed less. Because they knew exactly what to look for.

New engineers onboard faster. Because the codebase has one way of doing things. Not five. When every file follows the same patterns, a new team member who learns one module can navigate every module.

Debugging goes from days to hours. Because the code was written by someone who understood it. Not generated by a model that approximated it.

And if you're the founder or executive hiring a dev team, this affects you too. Ask your engineers: "Show me the last 5 PRs where AI generated the code. Can you explain each one?" If they can't, you're accumulating debt you can't see on any dashboard.

The teams that shipped fastest at places like Shopify weren't the ones that wrote the most code. They were the ones whose code was the most consistent. The most predictable. The most maintainable. Every single time.

"AI makes disciplined teams unstoppable. AI makes undisciplined teams dangerous. Same tool. Different foundations."

The 3-Question Framework

You don't stop using AI. That's not the answer.

Here's a military term for you: force multiplier. Night vision goggles don't give you more soldiers. They make the soldiers you have more effective. But only if those soldiers know how to fight. Night vision on someone who's never held a weapon? Useless. Dangerous.

AI is the same. It's a force multiplier. But you need the discipline to aim it. Without that discipline, you're not multiplying force. You're multiplying chaos.

The answer is reviewing AI code the way you'd review code from a talented but brand-new contractor who's never seen your codebase before. Because that's exactly what AI is. It's brilliant at generating code that looks professional. It has zero context about your specific system, your team's patterns, or the historical decisions that shaped your architecture. Treat it accordingly.

We built a 3-question framework for every AI-generated PR. Three questions. Takes 30 seconds. Catches most of the patterns I just showed you.

Question one. Does it reuse?

Before this code was written, did something similar already exist in the codebase? Is there an existing utility, type definition, or pattern that does the same thing? If yes, delete the new code and use the existing one. This isn't about being pedantic. Every duplicate function is a fork in your codebase's future. Fix it at the PR level and it costs you 30 seconds. Fix it six months later and it costs you a week of refactoring.

This catches phantom code. One question. Duplicates and dead exports gone.

Question two. Does it follow convention?

Does the naming match? Does the file structure match? Does the error handling match? Does this code look like it belongs in this codebase, or does it look like it was dropped in from a different project? Check the imports. Check how errors are thrown and caught. Check where state is managed. Every deviation from convention is a friction point for every future developer who touches this code. It forces them to switch mental models mid-task, which slows them down and increases the chance of introducing new bugs.

If it doesn't match, refactor it before it ships. This catches convention violations.

Question three. Can the developer explain it without reading the AI's comments?

Not "does it work." Not "can they read the AI's explanation back to me." Can the developer who submitted this PR walk through the logic, line by line, with the AI's comments deleted?

If they're reading documentation, that's not understanding. If they can't explain why the PKCE flow works this way without the AI's annotations, the code isn't ready. Send it back. This isn't about gatekeeping or making engineers feel bad. It's about ensuring that the person responsible for maintaining this code in production can actually maintain it.

This catches broken flows and suppressed errors. If the developer can't explain it, they can't maintain it. And unmaintainable code is debt.

Let me show you how fast this is. Last week. AI-generated PR lands. Question one: does it reuse? I search the codebase. We already have a date formatter. Delete the new one. Question two: follow conventions? Error handling doesn't match our patterns. Refactor. Question three: can the dev walk me through it? Yes. Ship it. Three minutes. Done.

The Close

Five patterns. One principle. Three questions.

Broken flows nobody can debug. Suppressed errors. Duplicate code. Dead exports. Convention violations.

The principle: the most expensive code is the code that almost works.

The framework: Does it reuse? Does it follow convention? Can the developer explain it without the AI's help?

If your team is using AI coding tools, and they are, pick ten recent PRs. Ask those three questions. What you find will tell you exactly where the debt is hiding. Most teams are surprised by the results. Not because the debt is dramatic, but because it's pervasive. Small compromises in every PR that add up to a codebase that's slowly drifting away from consistency and comprehension.

And if you want help diagnosing what's already accumulated in your codebase, that's what a Strategy Session is for. We'll go through it, identify where the debt is hiding, and give you a plan to stop the compound interest.

But even if you never talk to me, take those three questions into your next code review.

"The AI tools aren't going away. The debt doesn't have to be inevitable."

Your next PR review is, what, a few hours away? Three questions. That's all it takes.

And if you want to understand the mindset that creates this debt in the first place, I made a whole video on Vibe Coding. That video is about the mindset. This one is about the codebase. Same problem, different layer. Watch it next.

Key Takeaways

- "Almost right" AI code is far more expensive than "completely wrong" code because it passes every quality gate your team has in place. Wrong code fails fast and gets thrown away in minutes. Almost-right code ships to production, gets built upon for months, and compounds into debt that costs orders of magnitude more to untangle than it would have cost to catch at the PR level.

- AI generates plausible code, not correct code, and the distinction is critical. Plausible means it compiles, follows common patterns, and looks professional. Correct means it works in your specific system, reuses your existing utilities, follows your team's conventions, and can be maintained by the engineers who ship it. Every AI-generated PR should be reviewed with this distinction in mind.

- The five patterns of AI-generated debt are interconnected and compound on each other. Broken auth flows that nobody can debug, suppressed type errors masquerading as clean code, duplicate utility functions that fragment your codebase, dead exports that confuse new engineers, and convention violations that erode codebase consistency. Catching any one of them reduces the severity of the others.

- The 3-question code review framework takes 30 seconds per PR and catches the majority of AI-generated debt. "Does it reuse?" catches phantom code and duplicates. "Does it follow convention?" catches style and pattern violations. "Can the developer explain it without AI assistance?" catches comprehension gaps and suppressed errors. Teams that implement this framework report faster review turnaround, not slower.

- Developer trust in AI-generated code is declining rapidly, dropping from 40% to 29% in a single year according to Stack Overflow's survey of 65,000+ developers. This isn't pessimism. It's experience. Teams are discovering the gap between "code that works in testing" and "code that works in production at scale six months later." The teams that acknowledge this gap and build review processes around it will outperform those that chase velocity metrics while ignoring maintenance costs.

Frequently Asked Questions

What makes AI-generated technical debt different from traditional technical debt?

Traditional technical debt is usually visible. You can see the messy code, the missing tests, the outdated dependencies. Linters flag it. CI pipelines catch it. Developers grumble about it in standups. AI-generated technical debt is different because it hides behind clean-looking code that passes every automated quality gate. The five patterns (broken flows, suppressed errors, duplicate logic, dead exports, convention violations) all share one characteristic: they look correct on the surface. A reviewer scanning the PR sees well-structured code with passing tests, and the subtle problems are buried in assumptions that only become visible under production conditions months later.

How much does AI-generated code debt actually cost companies?

The direct cost varies by codebase, but the pattern is consistent. Stripe's Developer Coefficient report found that companies globally lose an estimated $85 billion per year to technical debt overall. AI-generated debt compounds faster because it accumulates at the speed of AI code generation, which is dramatically faster than human code generation. In practical terms, I've seen teams where incident resolution on AI-written modules takes 3-4x longer than on human-written modules, features that should take days take weeks because of duplicate utilities and inconsistent conventions, and senior engineers spend more time untangling AI-generated code than they save by generating it. The compound interest is real.

Should engineering teams stop using AI coding tools to avoid this debt?

No. Stopping is the wrong answer. AI coding tools are genuine force multipliers when paired with engineering discipline. The right approach is to change how you review AI-generated code. Treat it like code from a talented contractor who has never seen your codebase: review it for convention adherence, check for duplicates against existing utilities, and verify that the submitting engineer can explain the code without AI assistance. Teams that implement structured review processes for AI code actually see faster review turnaround times because reviewers have clear, specific criteria instead of open-ended "does this look right?" scanning.

What are the warning signs that AI-generated debt is accumulating in my codebase?

There are several early indicators to watch for. A rising count of eslint-disable or type suppression comments is the most obvious signal. Multiple utility functions or type definitions that serve the same purpose (like User, UserData, UserInfo, and UserResponse all representing the same entity) indicate phantom code accumulation. Inconsistent naming conventions, error handling patterns, or file structures across recent files suggest convention drift. And if your incident resolution time is trending upward while your feature velocity is also trending upward, that's the classic signature of AI-generated debt: speed on the way in, slowness on the way out.
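If you want a number for that first signal, here's a rough sketch of a counter. It's a plain text match over file contents you've already loaded, so it will also count the phrase inside strings or docs. Treat the output as a starting point, not an audit. `SourceFile`, `SuppressionCount`, and `countSuppressions` are hypothetical names.

```typescript
// A file's path and contents, loaded however your tooling prefers.
interface SourceFile {
  path: string;
  text: string;
}

interface SuppressionCount {
  path: string;
  count: number;
}

// Matches ESLint disable directives and TypeScript suppression comments.
const SUPPRESSION_PATTERN =
  /eslint-disable(?:-next-line|-line)?|@ts-ignore|@ts-expect-error|@ts-nocheck/g;

// Returns the files that contain suppressions, worst offenders first.
function countSuppressions(files: readonly SourceFile[]): SuppressionCount[] {
  return files
    .map((file) => ({
      path: file.path,
      count: (file.text.match(SUPPRESSION_PATTERN) ?? []).length,
    }))
    .filter((entry) => entry.count > 0)
    .sort((a, b) => b.count - a.count);
}
```

Run it against the same set of files every month and watch the trend. The absolute number matters less than the direction.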

How do I implement the 3-question framework on an existing team that's already using AI tools heavily?

Start with a single sprint. Pick ten recent AI-generated PRs and retroactively apply the three questions: Does it reuse existing code? Does it follow our conventions? Can the author explain it without AI assistance? Document what you find. This baseline audit usually reveals enough concrete examples to get buy-in from the team. Then make the three questions part of your PR template going forward. Most teams find that the framework actually speeds up reviews because it replaces vague "does this look good?" evaluation with specific, answerable criteria. The key is framing it as a quality investment, not a speed tax. Show the team the math: 30 seconds of structured review now versus days of debugging later.